Most organizations today recognize the potential of AI to improve decision-making. Many have experimented with large language models (LLMs) and chatbot-style interfaces in the hope of unlocking value from their internal knowledge. Yet despite significant investment, the results have often fallen short. The gap is rarely one of enthusiasm or budget. The underlying approach is simply not designed for how enterprise knowledge actually lives and changes over time.

A recent MIT study highlights what many teams are experiencing first-hand: while LLMs are powerful, most enterprise AI initiatives fail to deliver meaningful ROI. The core reason is simple: LLMs are not trained on the knowledge that matters most to your organization, namely the information locked inside your documents, systems, and experts. Even when tools can connect to internal content, they often struggle to represent nuances such as exceptions, precedence, ownership, and the way teams really interpret policy in practice.

At Geminos, we built KnowledgeWay to solve this problem. It is designed to capture and structure organizational knowledge so it can be reused reliably, not just searched. KnowledgeWay turns internal knowledge into something AI systems and humans can work with consistently, even as content grows and changes.

The challenge: making enterprise knowledge usable by AI

LLMs like ChatGPT excel at general reasoning and natural language interaction, but they lack access to organization-specific knowledge. They don’t understand your contracts, operating procedures, engineering manuals, or the informal expertise held by subject matter experts (SMEs). In practice, this shows up as answers that sound plausible but miss critical constraints, local terminology, or the “right way we do it here.”

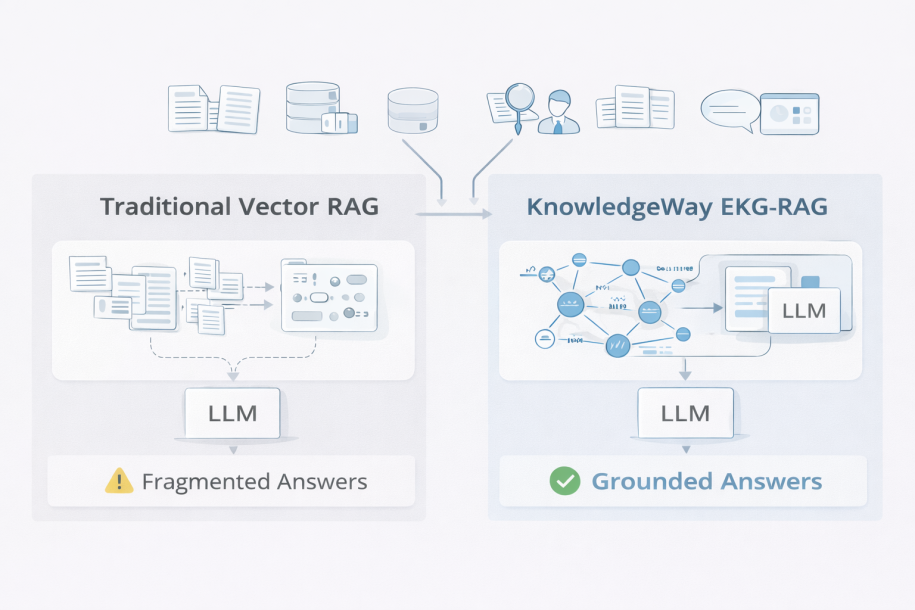

To address this, most teams have turned to Retrieval-Augmented Generation (RAG). RAG systems ingest internal documents, retrieve relevant text in response to a query, and inject that text into an LLM’s context window so it can generate an answer grounded in internal data. This is a sensible starting point because it avoids retraining models and can be rolled out quickly, especially for narrow domains.
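The retrieve-then-inject flow described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not how any particular product works: the `DOCUMENTS` corpus, the token-overlap scoring, and the prompt wording are all hypothetical, and the final call to an LLM is left out.

```python
import re

# Hypothetical in-memory corpus; a real system would ingest documents from internal stores.
DOCUMENTS = {
    "travel-policy": "Employees must book flights through the approved travel portal.",
    "expense-policy": "Expense reports are due within 30 days of purchase.",
    "security-policy": "Laptops must use full disk encryption.",
}


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, k: int = 1) -> list[tuple[str, str]]:
    """Return the k documents sharing the most tokens with the query."""
    query_tokens = tokenize(query)
    ranked = sorted(
        DOCUMENTS.items(),
        key=lambda item: len(query_tokens & tokenize(item[1])),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query: str, k: int = 1) -> str:
    """Inject the retrieved text into the prompt that would be sent to an LLM."""
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in retrieve(query, k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}"
    )


prompt = build_prompt("When are expense reports due?")
print(prompt)
```

Everything downstream depends on `retrieve` returning the right text: the model only ever sees what ends up in the `Context` section.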

RAG is a step in the right direction, but at enterprise scale, today’s approaches break down. The larger and messier the document estate becomes, the more “retrieval” turns into a guessing game where the system returns something adjacent rather than what the user actually needs. And once the wrong snippets are selected, the LLM can only do so much to recover.

Why vector RAG falls short (the most common form of RAG)

Most RAG implementations rely on vector databases. Documents are split into chunks, embedded into vectors, and retrieved using similarity search. That makes it easy to stand up an initial solution, and it can work well for simple factual questions over small document collections, where the relevant passage is clearly written in one place.

But as the corpus grows, several problems emerge:

- Loss of structure and intent: chunking strips away hierarchy, context, and precedence rules. A policy exception, a footnote, or a dependency on another document often gets separated from the thing it modifies.

- Poor cross-document reasoning: answers frequently depend on connecting concepts across multiple documents, teams, or versions. Similarity search is not designed to preserve those relationships.

- Errors amplified by chunking: the system might retrieve a near-match chunk that looks relevant but is subtly wrong for the situation. That error is then reinforced when the LLM generates a confident-sounding response.
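A toy example makes the first and third problems concrete. The sketch below assumes a hypothetical two-sentence policy, uses one chunk per sentence as a stand-in for fixed-size chunking, and swaps in a bag-of-words count vector for a learned embedding. The top-ranked chunk answers the question but leaves the exception behind.

```python
import math
import re
from collections import Counter

# Hypothetical policy text: the second sentence modifies the first.
POLICY = (
    "Contractors may access the production database with manager approval. "
    "Exception: access is never permitted during a release freeze."
)


def chunk_by_sentence(text: str) -> list[str]:
    """Split text into one chunk per sentence (a stand-in for fixed-size chunking)."""
    return [s.strip() for s in re.split(r"(?<=\.)\s+", text) if s.strip()]


def embed(text: str) -> Counter:
    """Toy embedding: a bag-of-words count vector. Real systems use learned embeddings."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_chunk(query: str, chunks: list[str]) -> str:
    """Return the single chunk most similar to the query."""
    q = embed(query)
    return max(chunks, key=lambda c: cosine(q, embed(c)))


chunks = chunk_by_sentence(POLICY)
best = top_chunk("Can contractors access the production database?", chunks)
print(best)
```

The retrieved chunk is the permissive rule on its own. The exception shares only one word with the query, so it ranks far lower and never reaches the model unless more chunks are pulled in, which lengthens the prompt.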

At scale, these issues turn into inconsistent answers, long prompt contexts, and a lot of manual work to improve retrieval quality. Worse, teams often find themselves rebuilding the same fixes again and again for new domains.

GraphRAG: an improvement, but not enough

GraphRAG introduces graph structures that connect entities, concepts, and document fragments. While this improves context and reasoning, most GraphRAG systems remain raw and hard to scale, and they lack true ontological structure. In other words, you might get a web of entities, but not a durable model of how the organization defines and uses concepts.

This matters because enterprise knowledge is rarely just “facts.” It includes definitions, ownership, precedence, exceptions, and process logic. Without a structured ontology, you still end up with brittle outputs, heavy implementation effort, and limited ability for knowledge engineers to curate what the system believes.

A different approach: KnowledgeWay and EKG-RAG

KnowledgeWay moves beyond these limitations by creating true Enterprise Knowledge Graphs (EKGs). Using agent-driven workflows, KnowledgeWay builds and evolves ontologies dynamically, extracting entities, relationships, and supporting text from documents. Instead of treating knowledge as a pile of chunks, it treats it as a connected model that can be maintained over time.

The result is a human-readable, curatable knowledge graph that works seamlessly with LLMs. When a user asks a question, KnowledgeWay can provide the LLM with targeted context from the EKG, not just loosely related snippets. That helps the system stay aligned to organizational terminology and decision logic as content scales.
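To illustrate what "targeted context" from a graph can look like, here is a minimal sketch. It is not KnowledgeWay's data model or API: the `Entity` class, the relation names (`has_exception`, `owned_by`), and the sample entities are all hypothetical. The point is only that a typed graph keeps a policy attached to its exception and its owner.

```python
from dataclasses import dataclass, field


@dataclass
class Entity:
    """A node in a toy knowledge graph. All names here are hypothetical."""

    name: str
    kind: str  # ontology class, e.g. "Policy" or "Team"
    text: str = ""  # supporting text extracted from a source document
    relations: list[tuple[str, str]] = field(default_factory=list)  # (relation, target name)


GRAPH = {
    e.name: e
    for e in [
        Entity(
            "Database Access Policy",
            "Policy",
            "Contractors may access the production database with manager approval.",
            [("has_exception", "Release Freeze Rule"), ("owned_by", "Platform Team")],
        ),
        Entity(
            "Release Freeze Rule",
            "Exception",
            "Access is never permitted during a release freeze.",
        ),
        Entity("Platform Team", "Team"),
    ]
}


def context_for(name: str) -> list[str]:
    """Collect an entity's text plus everything it links to, labeled by relation."""
    entity = GRAPH[name]
    lines = [f"{entity.kind}: {entity.text}"]
    for relation, target_name in entity.relations:
        target = GRAPH[target_name]
        detail = f": {target.text}" if target.text else ""
        lines.append(f"{relation} -> {target.kind} '{target.name}'{detail}")
    return lines


for line in context_for("Database Access Policy"):
    print(line)
```

Because the exception and the owner are explicit relations rather than neighboring text, they travel with the policy whenever it is used as context.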

Knowledge graphs that improve over time

Knowledge engineers can curate the EKG, resolve synonyms, fix inconsistencies, and incorporate SME feedback. This is important because language inside organizations is messy: teams use different terms for the same thing, old procedures linger, and exceptions are often learned through experience rather than documentation. KnowledgeWay is built to surface those issues so they can be corrected rather than repeatedly rediscovered.
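Synonym resolution is the simplest of these curation steps to picture. The sketch below assumes a hand-maintained alias table (`ALIASES`, with made-up procurement terms) and shows raw mentions being grouped under one canonical name. A real pipeline would likely combine such a table with automated suggestions.

```python
# Hypothetical alias table that a knowledge engineer might maintain.
ALIASES = {
    "po": "Purchase Order",
    "purchase order": "Purchase Order",
    "purchase req": "Purchase Requisition",
    "requisition": "Purchase Requisition",
}


def canonicalize(term: str) -> str:
    """Map a raw term to its canonical entity name, or return it stripped if unknown."""
    key = " ".join(term.lower().split())  # normalize case and whitespace
    return ALIASES.get(key, term.strip())


def merge_mentions(mentions: list[str]) -> dict[str, list[str]]:
    """Group raw mentions under their canonical name, preserving first-seen order."""
    merged: dict[str, list[str]] = {}
    for mention in mentions:
        merged.setdefault(canonicalize(mention), []).append(mention)
    return merged


merged = merge_mentions(["PO", "purchase  order", "Requisition", "Invoice"])
print(merged)
```

Terms with no alias, such as "Invoice" here, pass through unchanged, which makes them easy to spot as candidates for review.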

User ratings from the Q&A interface further guide continuous improvement. Low-rated answers become signals that something is missing, unclear, or mis-modeled in the graph, which helps prioritize curation work and focus on what actually impacts users.
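One way to turn ratings into a curation queue is sketched below. It assumes each rating can be traced back to the graph entity the answer relied on, which the text above does not specify. The 1 to 5 scale, the `threshold`, and the `min_votes` cutoff are likewise illustrative choices.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical feedback log: (graph entity the answer relied on, user rating from 1 to 5).
RATINGS = [
    ("Database Access Policy", 2),
    ("Database Access Policy", 1),
    ("Expense Policy", 5),
    ("Expense Policy", 4),
    ("Travel Policy", 3),
]


def curation_queue(ratings, threshold=3.0, min_votes=2):
    """Return (entity, mean rating) pairs below the threshold, worst first."""
    by_entity = defaultdict(list)
    for entity, score in ratings:
        by_entity[entity].append(score)
    flagged = [
        (entity, mean(scores))
        for entity, scores in by_entity.items()
        if len(scores) >= min_votes and mean(scores) < threshold
    ]
    return sorted(flagged, key=lambda pair: pair[1])


queue = curation_queue(RATINGS)
print(queue)
```

The `min_votes` cutoff keeps a single unhappy rating from sending an entity to the top of the queue.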

KnowledgeWay Q&A

KnowledgeWay provides a familiar chatbot interface backed by the enterprise knowledge graph. Answers are more consistent, context-aware, and faithful to organizational reality because the system can ground responses in the EKG and supporting source material. This reduces the “it depends who you ask” problem that shows up in large enterprises.

The platform works with any public or private LLM, including models deployed behind your firewall. That means teams can standardize on KnowledgeWay’s knowledge layer while remaining flexible about which model they use today and which model they adopt later.
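Model independence of this kind usually comes down to a narrow interface between the knowledge layer and the model. The sketch below is an assumption about how such a boundary could look, not a description of KnowledgeWay's internals: `LLMClient`, `answer`, and both stub classes are hypothetical, and the stubs return canned strings instead of calling a real model.

```python
from typing import Protocol


class LLMClient(Protocol):
    """Hypothetical interface: any object with a complete(prompt) method fits."""

    def complete(self, prompt: str) -> str: ...


class PublicModelStub:
    """Stand-in for a hosted model; a real client would call a provider API here."""

    def complete(self, prompt: str) -> str:
        return "public: " + prompt.splitlines()[-1]


class PrivateModelStub:
    """Stand-in for a model deployed behind the firewall."""

    def complete(self, prompt: str) -> str:
        return "private: " + prompt.splitlines()[-1]


def answer(question: str, context: str, llm: LLMClient) -> str:
    """Build one prompt from graph context and send it to whichever model is supplied."""
    prompt = f"Context:\n{context}\n\nQuestion: {question}"
    return llm.complete(prompt)


context = "Policy: Expense reports are due within 30 days of purchase."
for model in (PublicModelStub(), PrivateModelStub()):
    print(answer("When are expense reports due?", context, model))
```

Swapping models then means supplying a different object to `answer`. The context built from the knowledge layer stays the same.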

Software 3.0 in practice

KnowledgeWay exemplifies Software 3.0: applications driven by natural language, agents, and AI working in the background to guide users toward better outcomes. In practice, that means more than a chat front end. It means agent-driven workflows that help scope domains, build the knowledge structure, spot quality issues, and keep the system improving as new material arrives.

This approach also changes the operating model. Instead of constant prompt tuning and retrieval tweaking, teams can treat the EKG as a durable asset that can be curated, governed, and expanded across domains.

From knowledge to decisions

KnowledgeWay integrates with Geminos CauseWay to connect organizational knowledge with causal, data-driven decision-making, bridging intuitive understanding and rigorous analysis. KnowledgeWay helps teams capture how the organization thinks about a problem and what the accepted constraints are. CauseWay then quantifies what drives outcomes and what interventions are likely to change results.

Together, they support a more complete decision workflow: what we know, why it happens, what to change, and how to operationalize the learning.

Turning knowledge into a strategic asset

KnowledgeWay combines the strengths of RAG, knowledge graphs, and agent-driven AI to deliver faster time to value and measurable ROI. It is designed for real enterprise conditions: large document estates, evolving terminology, exceptions, and the need for consistency across teams.

If you want AI that understands your organization, not just the internet, KnowledgeWay is built for you.